Muhammad Hasan Ferdous

(he/him)

Ph.D. Candidate in Information Systems

Professional Summary

I am a Ph.D. candidate in Information Systems at UMBC specializing in causal AI, temporal causal discovery, and robust analysis of complex multivariate time series. My research focuses on developing methods that remain reliable under autocorrelation, non-stationarity, latent structure, and irregular sampling, with applications in healthcare, climate analytics, and cybersecurity.

I have contributed several frameworks to the field, including CDANs, eCDANs, DCD (Decomposition-based Causal Discovery), and TimeGraph, a synthetic benchmark suite that evaluates causal discovery algorithms under realistic temporal challenges. My work aims to bridge theory and practice by producing interpretable, intervention-relevant causal models that support high-stakes decision systems.

As a Graduate Teaching Assistant at UMBC, I have supported courses such as Structured Systems Analysis and Design, Database Program Development, Advanced Database Project, and Management Information Systems. I emphasize hands-on learning, analytical thinking, and accessible instruction that prepares students for pathways in AI/ML, data science, and business analytics.

Education

Ph.D. in Information Systems

University of Maryland, Baltimore County (UMBC)

M.S. in Information Systems

University of Maryland, Baltimore County (UMBC)

B.Sc. in Statistics

University of Dhaka, Bangladesh

Interests

My work sits at the intersection of causal discovery, time series analysis, and machine learning. I focus on developing methods that can recover meaningful causal structure from autocorrelated and non-stationary multivariate time series, which is exactly the kind of data that real-world systems produce.

Methodologically, I work on:

- Temporal causal discovery from complex, high-dimensional time series

- Decomposition-based approaches that separate trend, seasonal, and residual components (e.g., DCD)

- Attention-based and deep learning models (CDANs, eCDANs) for scalable structure learning

- Benchmarking frameworks (TimeGraph) to stress-test causal discovery algorithms under realistic temporal conditions

Application domains include:

- Healthcare, where robust causal structure can support early warning, treatment policy evaluation, and patient monitoring

- Climate and environmental systems, such as Arctic sea ice prediction and climate teleconnections

I am particularly interested in questions like:

- How can we make causal discovery robust to temporal dependence and non-stationarity?

- How should we evaluate causal graphs when the data are messy, irregular, and partially observed?

- How can causal structure be integrated into downstream decision-making and forecasting systems?

I am actively looking for collaborations at the interface of causal inference, time series, and real-world decision systems.

CDANs: Temporal Causal Discovery from Autocorrelated and Non-Stationary Time Series Data

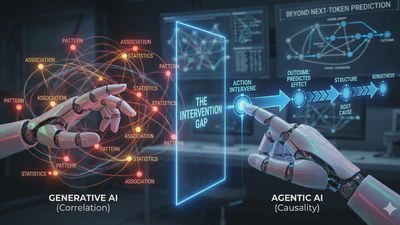

Beyond Next-Token Prediction: Why Agentic AI Needs Causal Guardrails

Exploring why the shift from Generative AI to Agentic AI requires a move from statistical correlation to causal reasoning.

Why Large Language Models Fail When the World Changes, and Why Causality Is No Longer Optional

Why large language models break under distribution shift, how prediction differs from control, and why causality is essential for robust Agentic AI.